Abstract

Dexterous manipulation is a crucial skill that enables robots to complete complex tasks. Multi-fingered dexterous hands are typically employed to achieve this capability, yet they often face significant perceptual challenges during manipulation due to visual self-occlusion. These challenges are exacerbated when transparent objects are manipulated, because light refraction and reflection cause a loss of depth information and introduce depth noise. To address these challenges, this paper proposes TransDex, a 3D visuo-tactile fusion motor policy based on point cloud reconstruction pre-training. Specifically, we first propose a self-supervised, Transformer-based point cloud reconstruction pre-training approach. This method accurately recovers the 3D structure of an object from the interactive point clouds of dexterous hands, even when random noise and large-scale masking are added. Building on this, we construct TransDex, in which perceptual encoding adopts a fine-grained hierarchical scheme and multi-round attention mechanisms adaptively fuse features of the robotic arm and dexterous hand to enable differentiated motor prediction. Results from transparent object manipulation experiments conducted on a real robotic system demonstrate that TransDex outperforms existing baseline methods. Further analysis validates the generalization capabilities of TransDex and the effectiveness of each of its components.

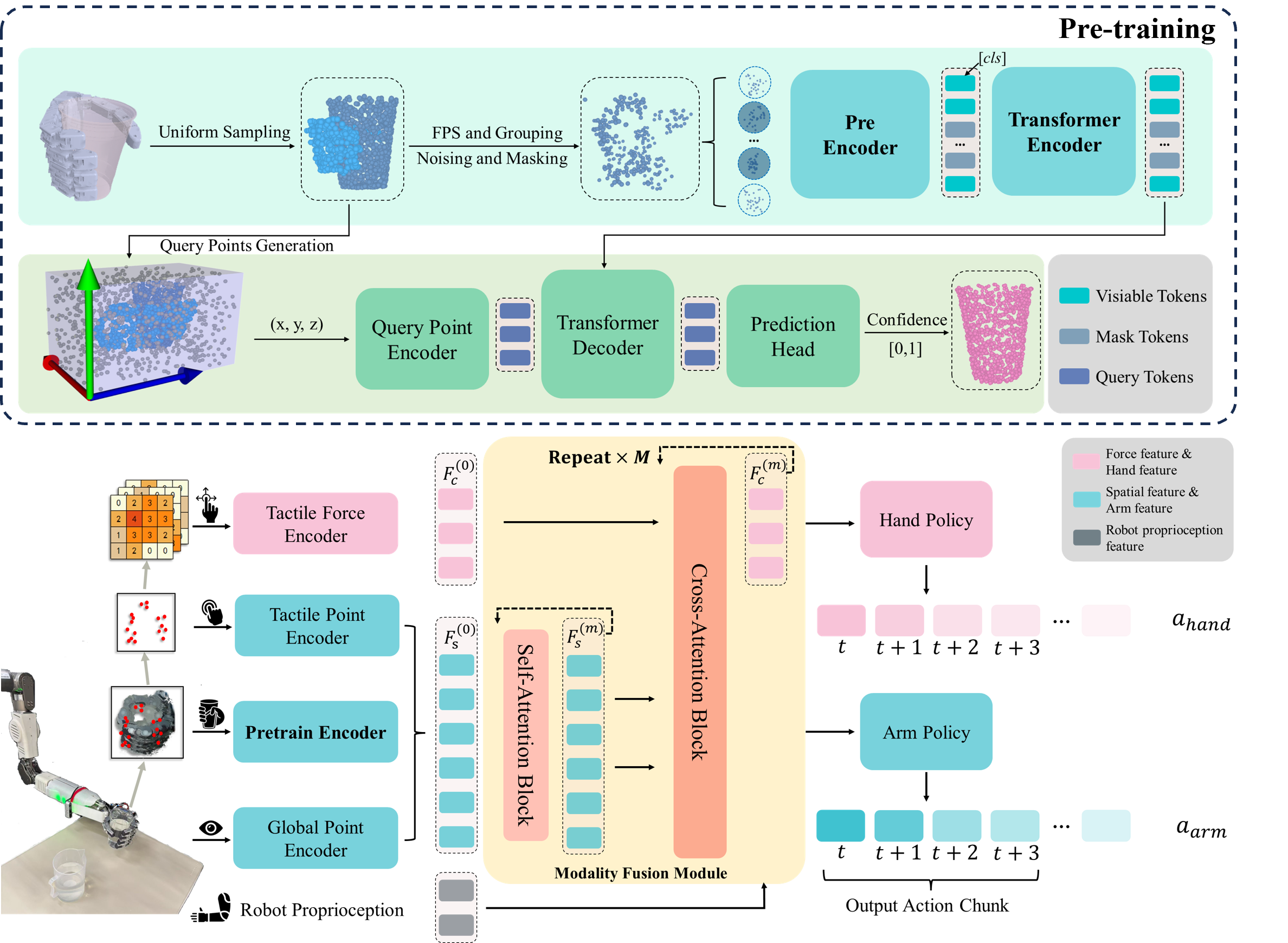

Method Overview

TransDex operates in two phases: (1) The pre-training framework encodes randomly masked and noisy hand-object interaction point clouds and reconstructs the complete object point cloud via a decoder. This process makes the pre-trained network's understanding of the object robust to incomplete perception. (2) Building upon the pre-trained network, the motor policy extracts fine-grained visuo-tactile information and integrates it across modalities, ultimately generating differentiated motion predictions for the robotic arm and the dexterous hand.
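The corruption step of phase (1) can be illustrated with a minimal NumPy sketch. Everything specific here is an assumption made for illustration and is not taken from the paper: the function names `corrupt_point_cloud` and `chamfer_distance`, the per-point (rather than patch-level) masking, the mask ratio, the noise scale, and the use of Chamfer distance as the reconstruction objective, which is a common choice for point cloud reconstruction but is not confirmed by this page.

```python
import numpy as np


def corrupt_point_cloud(points, mask_ratio=0.75, noise_std=0.002, rng=None):
    """Randomly drop a fraction of points, then jitter the survivors.

    points:     (N, 3) array of xyz coordinates.
    mask_ratio: fraction of points to remove (illustrative value, an assumption).
    noise_std:  std of the Gaussian jitter in the same units as `points`
                (illustrative value, an assumption).
    """
    rng = np.random.default_rng() if rng is None else rng
    n = points.shape[0]
    n_keep = max(1, int(round(n * (1.0 - mask_ratio))))
    # Sample the indices of the points that stay visible, without replacement.
    keep_idx = rng.choice(n, size=n_keep, replace=False)
    visible = points[keep_idx]
    # Add zero-mean Gaussian noise to imitate noisy depth measurements.
    return visible + rng.normal(0.0, noise_std, size=visible.shape)


def chamfer_distance(pred, target):
    """Symmetric Chamfer distance (squared Euclidean) between two point sets.

    Assumed here as the reconstruction objective; the page does not state
    which loss the authors use.
    """
    diff = pred[:, None, :] - target[None, :, :]   # (P, T, 3) pairwise offsets
    d2 = np.sum(diff ** 2, axis=-1)                # (P, T) squared distances
    # Mean nearest-neighbour distance in both directions.
    return d2.min(axis=1).mean() + d2.min(axis=0).mean()


# Usage: corrupt a synthetic "object" and measure how far it is from the original.
rng = np.random.default_rng(0)
obj = rng.uniform(-0.05, 0.05, size=(1024, 3))     # stand-in for an object point cloud
partial = corrupt_point_cloud(obj, rng=rng)
print(partial.shape)                               # (256, 3)
print(chamfer_distance(partial, obj))
```

In a training loop, the corrupted cloud would be the encoder input and the complete object cloud the decoder target, with the distance above (or whichever loss the authors actually use) driving the update.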

Experiments

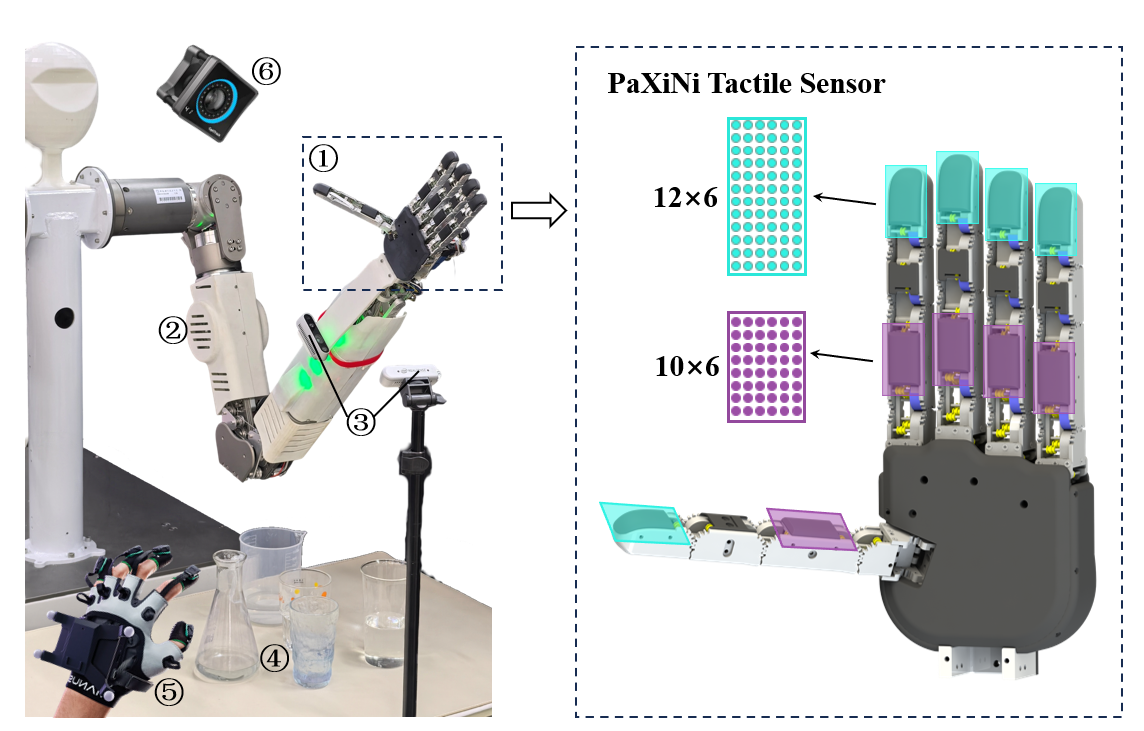

Robotic System Setup: ① a 16-DOF dexterous hand with tactile sensor arrays on the fingertips and finger pads; ② a 7-DOF robotic arm; ③ depth cameras; ④ experimental items; ⑤ a data glove; ⑥ a motion capture camera.

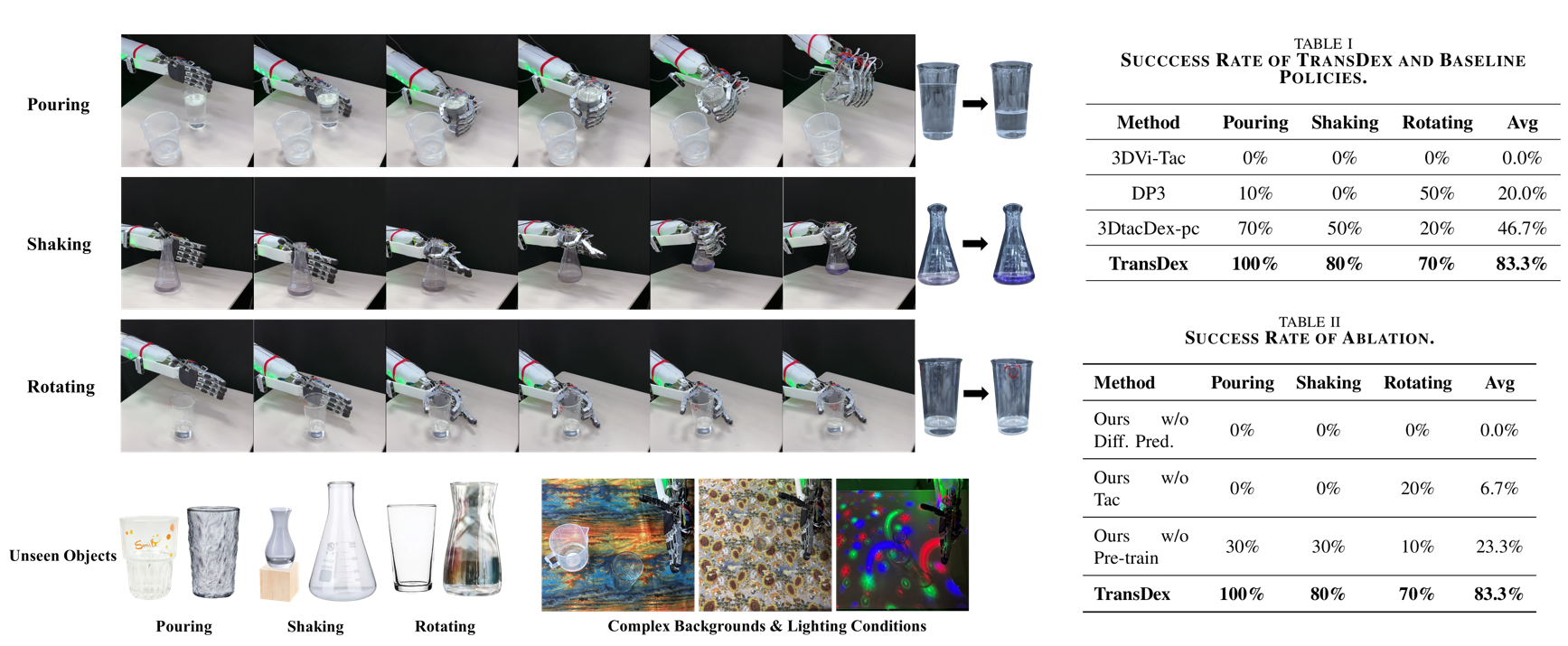

TransDex demonstrates strong capabilities in dexterous manipulation of transparent objects, and it generalizes to unseen scenarios and remains robust in complex ones.

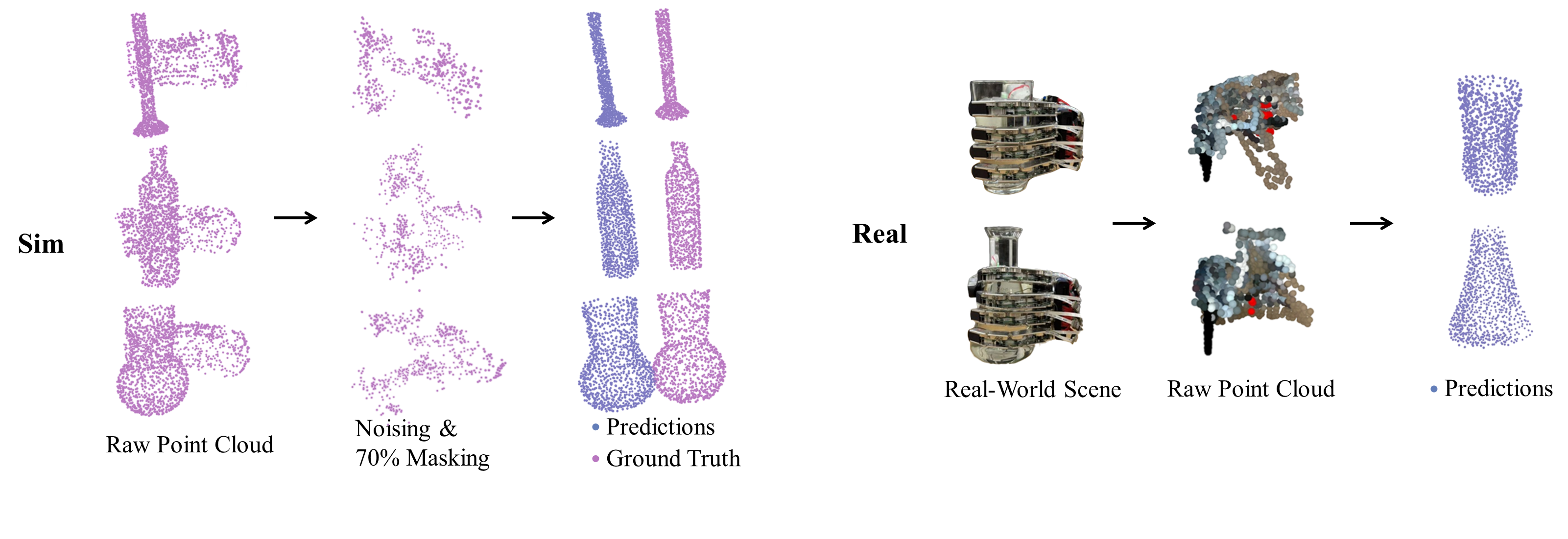

Visualization of point cloud reconstruction performance in pre-training tasks and real-world transfer reconstruction outcomes.

Real-World Demonstrations (3×)

Pouring

Shaking

Rotating

BibTeX

@misc{li2026transdex,
  title={TransDex: Pre-training Visuo-Tactile Policy with Point Cloud Reconstruction for Dexterous Manipulation of Transparent Objects},
  author={Fengguan Li and Yifan Ma and Chen Qian and Wentao Rao and Weiwei Shang},
  year={2026},
  eprint={2603.13869},
  archivePrefix={arXiv},
  primaryClass={cs.RO},
  url={https://arxiv.org/abs/2603.13869},
}